Forget GPU Anxiety: Microsoft BitNet Runs LLMs on an Ordinary CPU

Think you need a high-end GPU to run a large language model locally? Microsoft just proved otherwise — with one open-source project.

The Problem We’ve All Been Dealing With

Over the past few years, large language models have grown remarkably capable — but running them locally has only gotten harder:

- Memory explosion: A 7B-parameter model at FP16 precision needs roughly 14 GB of VRAM for its weights alone (7 billion parameters × 2 bytes each)

- Hardware dependency: Consumer GPUs like the RTX 3060 (12 GB) run out of memory as soon as models get any larger

- High power consumption: A single inference run burns through significant electricity — edge devices simply can’t handle it

- Privacy concerns: Sending sensitive data to the cloud is something many users and organizations want to avoid entirely

In short: modern LLMs are too heavy for ordinary people to run.

Quantization has long been the standard workaround — compressing 16-bit float weights down to 8-bit or 4-bit. But that’s still just trimming at the edges, and precision loss is always lurking.

Microsoft’s answer is far more radical: train the model with 1.58-bit weights from the start, rather than compressing it afterward.
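
The memory figures in this section follow from simple arithmetic: weight storage is the parameter count times the bits per weight. Here is a rough sketch of that calculation. The function name is illustrative, and it counts weights only, ignoring activations, the KV cache, and runtime overhead.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate weight storage in GB (1 GB = 10**9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


# Compare a 7B-parameter model at several weight precisions.
for label, bits in [("FP16", 16), ("INT8", 8), ("INT4", 4), ("ternary", 1.58)]:
    print(f"7B model at {label:>7}: {weight_memory_gb(7e9, bits):5.2f} GB")
```

At 1.58 bits per weight, the same 7B model would need about 1.4 GB for its weights, a tenth of the FP16 figure.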

What Is BitNet?

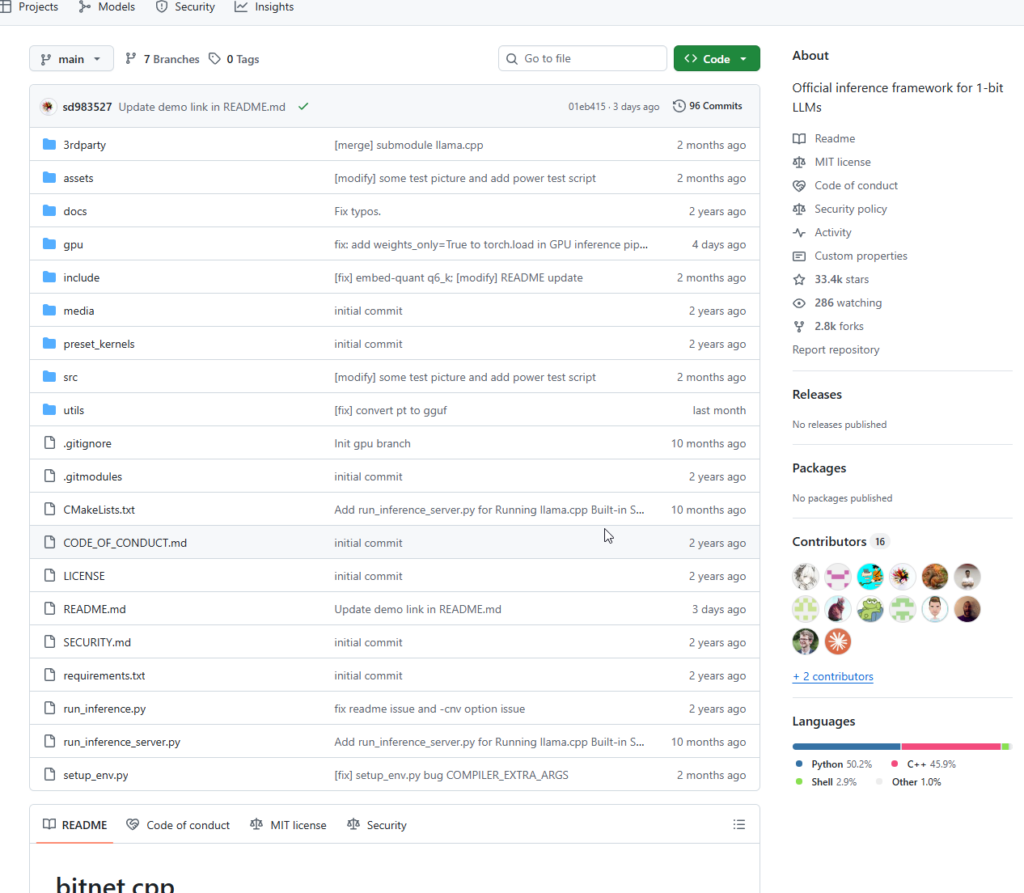

BitNet is Microsoft’s official open-source inference framework for 1-bit large language models. The core project is bitnet.cpp, available on GitHub at microsoft/BitNet.

Its companion model is BitNet b1.58. The “b1.58” comes from information theory: a ternary weight, which takes one of three values (−1, 0, or +1), carries at most $\log_2 3 \approx 1.58$ bits of information.
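
As a quick check on the 1.58 figure, the sketch below computes $\log_2 3$ and shows one simple way to get close to it in practice. Because $3^5 = 243 \le 256$, five ternary weights fit in a single byte, which works out to 1.6 bits per weight. The `pack5` and `unpack5` helpers are hypothetical names used for illustration; this is not necessarily the storage layout bitnet.cpp uses.

```python
import math

print(f"log2(3) = {math.log2(3):.4f} bits per ternary weight")


def pack5(trits):
    """Pack five values from {-1, 0, +1} into one integer in [0, 242]."""
    assert len(trits) == 5 and all(t in (-1, 0, 1) for t in trits)
    value = 0
    for t in reversed(trits):
        value = value * 3 + (t + 1)  # map -1/0/+1 to base-3 digits 0/1/2
    return value


def unpack5(byte):
    """Inverse of pack5: recover the five ternary values."""
    trits = []
    for _ in range(5):
        byte, digit = divmod(byte, 3)
        trits.append(digit - 1)
    return trits


weights = [-1, 0, 1, 1, -1]
packed = pack5(weights)
assert 0 <= packed < 256            # fits in a single byte
assert unpack5(packed) == weights   # round-trips losslessly
```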

This is not ordinary post-training quantization. It is a 1-bit model trained natively from scratch.

Core Technical Highlights

| Feature | Description |
|---|---|
| Ternary weights | Weights can only be −1, 0, or +1, implemented via absmean quantization |
| 8-bit activations | Ultra-minimal weights paired with 8-bit activation precision for a balance of efficiency and expressiveness |
| Multiplication-free inference | Ternary arithmetic reduces matrix multiplication to additions and subtractions, which is friendly to CPUs by design |
| Dedicated inference kernels | Deeply customized on top of llama.cpp, further accelerated with T-MAC lookup table techniques |
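
To illustrate the ternary-weight and multiplication-free rows, here is a minimal pure-Python sketch. It applies absmean quantization (scale by the mean absolute weight, round, then clip to [−1, 1]) and then computes a dot product using only additions and subtractions. The function names are illustrative. Real kernels work on packed weights with vectorized instructions, not Python loops.

```python
def absmean_quantize(weights, eps=1e-5):
    """Return (ternary weights, scale) using absmean quantization."""
    scale = sum(abs(w) for w in weights) / len(weights)  # gamma = mean(|W|)
    ternary = [max(-1, min(1, round(w / (scale + eps)))) for w in weights]
    return ternary, scale


def ternary_dot(ternary, activations):
    """Dot product with ternary weights: additions and subtractions only."""
    acc = 0
    for t, x in zip(ternary, activations):
        if t == 1:
            acc += x
        elif t == -1:
            acc -= x
        # t == 0 contributes nothing and is skipped
    return acc


w = [0.9, -0.05, -1.2, 0.4, 0.02]
x = [3, -1, 2, 5, 7]  # stand-ins for 8-bit integer activations
q, scale = absmean_quantize(w)
print(q)
# A single rescale per output (not per weight) approximates the original dot product.
print(ternary_dot(q, x) * scale)
```

The only multiplication left is the final rescale, which happens once per output value, not once per weight.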

The flagship model, BitNet b1.58 2B4T (2 billion parameters, trained on 4 trillion tokens), was released in 2025 and is described by Microsoft as the first open-source, native 1-bit LLM at the 2-billion-parameter scale.

How Good Is It, Really?

Comparison with Full-Precision Models of Similar Scale

BitNet b1.58 2B4T requires only 0.4 GB of memory for non-embedding weights, while comparable full-precision models typically need 1.4–4.8 GB. Decoding latency on CPU is just 29 ms per token, with estimated energy consumption as low as 0.028 J per inference.

On the GSM8K mathematical reasoning benchmark, BitNet scores 58.38, ahead of the similarly sized Qwen2.5 and MiniCPM models, while using dramatically fewer computational resources.

Inference Performance Gains

On x86 CPUs, BitNet delivers 2.37×–6.17× faster inference and 71.9%–82.2% lower energy consumption compared to full-precision equivalents. A 100B-parameter BitNet b1.58 model can run on a single CPU at roughly 5–7 tokens per second — close to natural human reading speed.

Bottom line: 3.5× to 12× less weight memory than comparable full-precision models (0.4 GB versus 1.4–4.8 GB), with competitive benchmark results.

How to Use It

Requirements

- Python 3.9+

- CMake 3.22+

- Clang 18+ (recommended) or GCC

- Windows users: Visual Studio 2022
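
Before cloning, it can help to confirm that the required tools are present. Below is a small, hedged sketch of such a check using only the Python standard library. The helper names are hypothetical, it assumes each tool reports its version via `--version`, and it does not try to detect Visual Studio on Windows.

```python
import re
import shutil
import subprocess
import sys


def parse_version(text):
    """Extract the first dotted version number from a tool's --version output."""
    match = re.search(r"(\d+)\.(\d+)(?:\.(\d+))?", text)
    if not match:
        return None
    return tuple(int(part) for part in match.groups(default="0"))


def tool_version(executable):
    """Return the version tuple for an executable on PATH, or None if missing."""
    path = shutil.which(executable)
    if path is None:
        return None
    result = subprocess.run([path, "--version"], capture_output=True, text=True)
    return parse_version(result.stdout + result.stderr)


def check(name, found, minimum):
    """Print and return whether the found version meets the minimum."""
    ok = found is not None and tuple(found) >= minimum
    status = "OK" if ok else "MISSING OR TOO OLD"
    print(f"{status}: {name} (found {found}, need >= {minimum})")
    return ok


if __name__ == "__main__":
    check("Python", sys.version_info[:3], (3, 9, 0))
    check("CMake", tool_version("cmake"), (3, 22, 0))
    check("Clang", tool_version("clang"), (18, 0, 0))
```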

Quickstart (5 Steps)

Step 1 — Clone the repository

git clone --recursive https://github.com/microsoft/BitNet.git

cd BitNet

Step 2 — Create environment and install dependencies

conda create -n bitnet-cpp python=3.9

conda activate bitnet-cpp

pip install -r requirements.txt

Step 3 — Download the model

huggingface-cli download microsoft/BitNet-b1.58-2B-4T-gguf \
    --local-dir models/BitNet-b1.58-2B-4T

Step 4 — Configure the build environment

python setup_env.py -md models/BitNet-b1.58-2B-4T -q i2_s

Step 5 — Run inference

python run_inference.py \
    -m models/BitNet-b1.58-2B-4T/ggml-model-i2_s.gguf \
    -p "You are a helpful assistant" \
    -cnv
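
If you would rather launch the model from a script, the same command can be assembled in Python. This is a minimal sketch, assuming it runs from the repository root. `build_command` is a hypothetical helper, and it uses only the flags shown in Step 5.

```python
import shlex
import subprocess
import sys

MODEL = "models/BitNet-b1.58-2B-4T/ggml-model-i2_s.gguf"


def build_command(model, prompt, conversation=True):
    """Assemble the run_inference.py invocation from Step 5 as an argument list."""
    cmd = [sys.executable, "run_inference.py", "-m", model, "-p", prompt]
    if conversation:
        cmd.append("-cnv")
    return cmd


cmd = build_command(MODEL, "You are a helpful assistant")
print(shlex.join(cmd))  # show the exact command that would be executed

# To actually start the chat, uncomment the line below. It inherits
# stdin/stdout, so the interactive session behaves as it does in a terminal.
# subprocess.run(cmd, check=True)
```

Passing the arguments as a list, not as a single shell string, avoids quoting problems when the prompt contains spaces or quotes.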

⚠️ One Critical Note

You must use the dedicated bitnet.cpp inference framework to realize the efficiency gains. Loading and running the model directly through HuggingFace Transformers — without the specialized kernels — may result in speed and energy performance no better than a full-precision model, or even worse.

Who Is This For?

Great fit:

- Local knowledge bases and private deployments — data never leaves the machine

- Edge device AI — Raspberry Pi, low-power NAS, industrial controllers

- Developer research — an experimental platform for 1-bit LLM training and inference

- Resource-constrained servers — inference without any GPU at all

Less suitable for:

- Production commercial use — official guidance recommends thorough testing before any business application

- Multilingual scenarios — non-English language support remains limited at this stage

Conclusion

BitNet isn’t a patch or an optimization — it represents a genuine paradigm shift in how large models are designed:

Rather than asking “how do we squeeze a large model into less memory,” the question becomes “what if the model were designed to be lightweight from day one?”

When a 2B-parameter model needs only 0.4 GB of memory and runs smoothly in conversation on an ordinary CPU, the barrier to local AI is no longer the price of a graphics card. Smartphones, routers, industrial controllers — devices that were never “supposed” to run language models may soon be doing exactly that.

Microsoft has opened the door. Larger models, broader multilingual support, and NPU acceleration are all on the horizon. The 1-bit era has only just begun.

GitHub: https://github.com/microsoft/BitNet

Model download: Search microsoft/bitnet-b1.58-2B-4T on HuggingFace

Live demo: aka.ms/bitnet-demo