Fish Speech: The Open-Source TTS Ceiling — Clone Any Voice in 10 Seconds

High-quality text-to-speech used to be the exclusive playground of big tech. Now, an open-source project trained on 10 million hours of audio has kicked that door wide open.

1. What Problem Does It Solve?

The high-quality TTS space has long been gated by several stubborn barriers:

1. A glaring quality gap. Open-source solutions have always lagged behind commercial products like ElevenLabs and Azure TTS in naturalness and emotional richness — there has been an unmistakable “listening experience chasm.”

2. High barriers to voice cloning. Cloning someone’s voice traditionally required large amounts of clean recording data or a dedicated fine-tuning pipeline — completely out of reach for most people.

3. Clunky multilingual handling. Traditional TTS relies on phoneme dictionaries and language-specific preprocessing. Switching languages means switching models, and mixed-language output (e.g., Chinese-English code-switching) has always been a notorious pain point.

4. Coarse emotion control. Generated speech could only be tuned through basic parameters like speed and pitch. Asking a model to “say this while laughing” or “whisper this line” was essentially impossible.

Fish Speech tears down all four of these walls at once.

2. What Is Fish Speech?

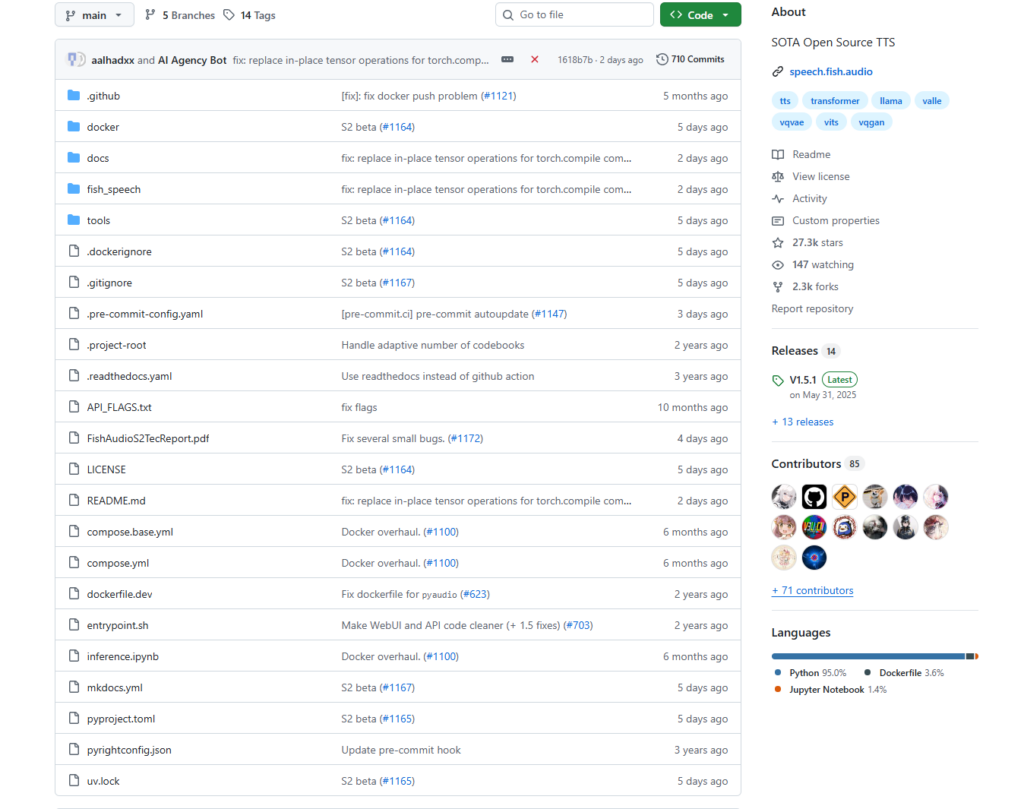

Fish Speech is a state-of-the-art text-to-speech system open-sourced by the Fish Audio team. It has already earned over 27,000 GitHub stars, making it one of the most-watched open-source TTS projects in the world.

The latest release, Fish Audio S2, matches or beats the strongest competing models, including closed-source commercial systems, on several key benchmarks:

| Benchmark | Fish Audio S2 | Best Closed-Source Competitor |
|---|---|---|
| Seed-TTS Eval WER, Chinese (lower is better) | 0.54% | Qwen3-TTS: 0.77% |
| Seed-TTS Eval WER, English (lower is better) | 0.99% | MiniMax Speech-02: 0.99% |
| Audio Turing Test (higher is better) | 0.515 | Seed-TTS: 0.417 (S2 scores about 24% higher) |
| EmergentTTS-Eval Win Rate | 81.88% | n/a |

In plain terms: in a listening test designed to tell human speech from AI, more than half of listeners believed Fish Speech S2’s output was a real human recording.
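The relative gap on the Audio Turing Test can be checked with a quick calculation. This is a minimal sketch that uses only the two scores from the table; `relative_gain` is a helper name introduced here for illustration.

```python
def relative_gain(ours: float, theirs: float) -> float:
    """Return how much higher `ours` is than `theirs`, as a fraction of `theirs`."""
    return (ours - theirs) / theirs


s2_score = 0.515        # Fish Audio S2, from the table
seed_tts_score = 0.417  # Seed-TTS, from the table

gain = relative_gain(s2_score, seed_tts_score)
print(f"S2 scores {gain:.1%} higher than Seed-TTS")
```

The result is about 23.5%, which rounds to the 24% figure quoted for this benchmark.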

Core Technical Highlights

① Dual-Autoregressive Architecture (Dual-AR)

S2 splits speech generation into two stages: a slow AR (4B parameters) predicts semantic codes along the time axis, while a fast AR (400M parameters) fills in 9 residual codebooks at each time step. This asymmetric design preserves audio fidelity while keeping inference efficient.
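The control flow of a dual-autoregressive decoder can be pictured as two nested loops. The sketch below is a toy illustration of that idea only, not Fish Audio's implementation: `generate_frames`, `toy_slow`, and `toy_fast` are hypothetical names, and the two "models" are simple arithmetic stand-ins where real networks would sample from predicted distributions.

```python
from typing import Callable, List

NUM_RESIDUAL_CODEBOOKS = 9  # from the article: the fast AR fills 9 residual codebooks


def generate_frames(
    num_steps: int,
    slow_step: Callable[[List[List[int]]], int],
    fast_step: Callable[[int, List[int]], int],
) -> List[List[int]]:
    """Toy dual-autoregressive loop.

    slow_step sees all previous frames and returns the next semantic code.
    fast_step sees that semantic code plus the residual codes chosen so far
    in the current frame and returns the next residual code.
    """
    frames: List[List[int]] = []
    for _ in range(num_steps):
        semantic = slow_step(frames)  # one call to the large model per time step
        residuals: List[int] = []
        for _ in range(NUM_RESIDUAL_CODEBOOKS):
            # nine calls to the small model per time step
            residuals.append(fast_step(semantic, residuals))
        frames.append([semantic] + residuals)
    return frames


# Hypothetical stand-ins for the two networks.
def toy_slow(history: List[List[int]]) -> int:
    return len(history) % 7


def toy_fast(semantic: int, residuals: List[int]) -> int:
    return (semantic + len(residuals)) % 5


frames = generate_frames(3, toy_slow, toy_fast)
print(len(frames), len(frames[0]))
```

Each frame holds one semantic code followed by nine residual codes. The point of the asymmetry is that the large model runs once per time step, while the small model absorbs the nine extra per-step predictions.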

② Reinforcement Learning Alignment (GRPO)

S2 uses Group Relative Policy Optimization for post-training alignment. The reward signal combines semantic accuracy, instruction adherence, acoustic quality scoring, and timbre similarity — resulting in more stable, natural output.
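The "group relative" part of GRPO can be sketched in a few lines: several candidate outputs for the same prompt are scored, and each score is normalized against its own group. The example below is an assumption-laden illustration, not S2's training code. The weights in `WEIGHTS` are hypothetical (the article names the four reward terms but not how they are combined), and the population standard deviation is one common choice for the normalizer.

```python
from statistics import mean, pstdev
from typing import Dict, List

# Hypothetical weights for the four reward terms named in the article.
WEIGHTS = {"semantic": 0.4, "instruction": 0.2, "acoustic": 0.2, "timbre": 0.2}


def combined_reward(scores: Dict[str, float]) -> float:
    """Weighted sum of the per-term scores for one candidate."""
    return sum(WEIGHTS[name] * scores[name] for name in WEIGHTS)


def group_relative_advantages(rewards: List[float], eps: float = 1e-8) -> List[float]:
    """Normalize each reward against the mean and std of its own group."""
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


# Three candidate generations for the same prompt (made-up scores).
group = [
    {"semantic": 0.9, "instruction": 0.9, "acoustic": 0.9, "timbre": 0.9},
    {"semantic": 0.5, "instruction": 0.5, "acoustic": 0.5, "timbre": 0.5},
    {"semantic": 0.7, "instruction": 0.7, "acoustic": 0.7, "timbre": 0.7},
]
rewards = [combined_reward(s) for s in group]
advantages = group_relative_advantages(rewards)
print([round(a, 3) for a in advantages])
```

Candidates that score above their group's average get a positive advantage and are reinforced; those below average are pushed down. No separate value network is needed to provide the baseline.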

③ Natural-Language Emotion Tags

This is S2’s most immediately impressive feature. You can insert free-form control tags anywhere in your text:

    Today's news [in a broadcast anchor tone] is here — [laugh] honestly, I have no idea what to say.

Tags such as [laugh], [whispers], [super happy], or [sad] are written in plain natural language and take effect at the word level, so you can steer delivery precisely within a sentence.
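When preparing scripts with inline tags, it can help to list the tags a piece of text contains before sending it for synthesis. The helper below is a hypothetical pre-flight utility, not part of Fish Speech; it assumes only the square-bracket syntax shown above and says nothing about how the model itself interprets the tags.

```python
import re
from typing import List, Tuple

# Matches one [tag]; nested brackets are not supported in this sketch.
TAG_PATTERN = re.compile(r"\[([^\[\]]+)\]")


def split_tags(text: str) -> Tuple[str, List[str]]:
    """Return the text with tags removed, plus the tags in order of appearance."""
    tags = TAG_PATTERN.findall(text)
    plain = TAG_PATTERN.sub("", text)
    plain = re.sub(r"\s{2,}", " ", plain).strip()  # tidy gaps left by removed tags
    return plain, tags


plain, tags = split_tags(
    "Today's news [in a broadcast anchor tone] is here. "
    "[laugh] Honestly, I have no idea what to say."
)
print(tags)
print(plain)
```

The tag list makes it easy to spot typos or unbalanced brackets in a long script, and the stripped text is handy for word counts or subtitles.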

④ Zero-Shot Voice Cloning

With just 10–30 seconds of reference audio, S2 can clone a target voice — no fine-tuning or additional training required.

⑤ 50+ Languages, No Phoneme Dictionaries

S2 processes raw text directly, with no dependence on phoneme lexicons or language-specific preprocessing. Chinese, English, Japanese, Korean, French, German, Arabic, and 40+ more languages work out of the box, with seamless mixed-language generation.

⑥ Native Multi-Speaker Generation

Multiple speakers can be generated in a single request, controlled via <|speaker:0|> and <|speaker:1|> tokens — no need to upload separate reference audio per speaker.
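A dialogue script with speaker tokens can be assembled programmatically. The sketch below is a hypothetical helper: it uses the `<|speaker:N|>` token format from the text, but placing the token directly before each line and joining turns with newlines are assumptions that should be checked against the official documentation.

```python
from typing import Iterable, List, Tuple


def build_dialogue(turns: Iterable[Tuple[int, str]]) -> str:
    """Prefix each turn with a <|speaker:N|> token and join the turns."""
    parts: List[str] = []
    for speaker_id, line in turns:
        if speaker_id < 0:
            raise ValueError("speaker_id must be non-negative")
        parts.append(f"<|speaker:{speaker_id}|>{line.strip()}")
    return "\n".join(parts)


script = build_dialogue([
    (0, "Did you hear the new release?"),
    (1, "I did. It sounds remarkably natural."),
])
print(script)
```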

3. How to Use It

Option A: Try It Online (Zero Setup)

Visit the official demo at fish.audio. Enter text to hear it synthesized, or upload reference audio to try voice cloning — no installation needed.

Option B: Self-Hosted Local Deployment

Hardware requirements: GPU with ≥ 24 GB VRAM, Linux or WSL environment.

⚠️ Note: The 24 GB requirement applies to the flagship S2 model. With less VRAM (e.g., an RTX 3060 with 12 GB), use the distilled S1-mini (0.5B) instead, available on Hugging Face.
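The VRAM rule above reduces to a single threshold. A minimal sketch, with `pick_model` as a hypothetical helper and the returned strings as plain labels rather than repository or model IDs:

```python
S2_MIN_VRAM_GB = 24  # flagship requirement stated in the article


def pick_model(vram_gb: float) -> str:
    """Return which model family fits the available GPU memory."""
    return "S2" if vram_gb >= S2_MIN_VRAM_GB else "S1-mini"


print(pick_model(12))  # e.g. an RTX 3060 with 12 GB
print(pick_model(24))
```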

Step 1 — Clone the repository

    git clone https://github.com/fishaudio/fish-speech.git
    cd fish-speech

Step 2 — Install dependencies (Conda method)

    # Install system-level audio dependencies (Debian/Ubuntu; may require sudo)
    apt install portaudio19-dev libsox-dev ffmpeg

    # Create a virtual environment
    conda create -n fish-speech python=3.12
    conda activate fish-speech

    # Install with GPU support (choose your CUDA version: cu126 / cu128 / cu129)
    # Quoting the extra keeps shells such as zsh from expanding the brackets
    pip install -e ".[cu129]"

Step 3 — Launch the WebUI

    # Direct launch
    python -m tools.run_webui

    # Or via Docker (recommended for production)
    docker compose --profile webui up

Open your browser and go to http://localhost:7860 to access the graphical interface for TTS and voice cloning.
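Model loading can take a while, so the page may not respond immediately after launch. The snippet below is a small, generic readiness check built on the Python standard library; `wait_for_port` is a hypothetical helper, not something shipped with Fish Speech, and it only confirms that the port accepts TCP connections, not that the model has finished loading.

```python
import socket
import time


def wait_for_port(host: str, port: int, timeout_s: float = 30.0, interval_s: float = 0.5) -> bool:
    """Poll until host:port accepts a TCP connection or the timeout expires."""
    deadline = time.monotonic() + timeout_s
    while time.monotonic() < deadline:
        try:
            with socket.create_connection((host, port), timeout=interval_s):
                return True
        except OSError:
            time.sleep(interval_s)  # not listening yet; retry shortly
    return False
```

Calling `wait_for_port("localhost", 7860)` after starting the WebUI returns `True` once the server is listening, which is useful in launch scripts that open the browser automatically.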

Step 4 — API Server (for integration into your own applications)

    # Start the API server
    docker compose --profile server up

    # Accessible at: http://localhost:8080

Or use the official Python SDK:

    # Install the SDK (shell)
    pip install fish-audio-sdk

    # Then, in Python:
    from fish_audio_sdk import Session, TTSRequest

    session = Session("YOUR_API_KEY")  # Get a free key at fish.audio

    # Stream the synthesized audio to disk chunk by chunk
    with open("output.mp3", "wb") as f:
        for chunk in session.tts(TTSRequest(text="Hello, world!")):
            f.write(chunk)

Option C: Full Voice Cloning Workflow

- Prepare 10–30 seconds of clean reference audio (WAV or MP3, no background noise)

- Upload the reference audio in the WebUI

- Enter your target text and click Generate

- Download the output

Four steps. No training. No fine-tuning.
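Before uploading, the length of a reference clip can be checked against the 10 to 30 second guideline. The sketch below uses Python's standard `wave` module, so it handles PCM WAV files only; MP3 input would need a separate decoder, and background noise is not assessed. The function names are introduced here for illustration.

```python
import wave

MIN_SECONDS = 10.0  # lower bound of the guideline in the article
MAX_SECONDS = 30.0  # upper bound of the guideline in the article


def wav_duration_seconds(path: str) -> float:
    """Return the duration of a PCM WAV file in seconds."""
    with wave.open(path, "rb") as wav:
        return wav.getnframes() / wav.getframerate()


def is_usable_reference(path: str) -> bool:
    """True if the clip length falls within the recommended range."""
    return MIN_SECONDS <= wav_duration_seconds(path) <= MAX_SECONDS
```

For example, `is_usable_reference("my_voice.wav")` would return `False` for a 3-second clip, which is a cue to record a longer sample before trying to clone the voice.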

4. Summary

Fish Speech S2 represents the current peak of open-source TTS. Rather than leading on a single metric, it matches or surpasses closed-source commercial systems across five dimensions at once: speech naturalness, emotion control, multilingual support, voice cloning speed, and inference efficiency. It does so while being open-source and locally deployable, so audio never has to be uploaded to a third party.

For content creators, it’s an extremely low-cost professional voice synthesis tool. For developers, it’s a speech engine that can be integrated into applications through the WebUI, the API server, or the SDK (commercial deployments should check the license terms listed at the end). For researchers, it provides a complete training and fine-tuning pipeline.

The only real barrier is that the flagship S2 model demands significant VRAM (24 GB) for inference. Users with less VRAM can start with S1-mini, or simply call the Fish Audio cloud API.

One-line verdict: The Llama moment for TTS has arrived.

GitHub: https://github.com/fishaudio/fish-speech

Live Demo: https://fish.audio

Documentation: https://speech.fish.audio

License: Fish Audio Research License (contact for commercial use)