The Problem: Why Is Fine-Tuning So Hard?

Fine-tuning a large language model sounds like a dream — take a general-purpose model and turn it into a domain expert for legal documents, customer support, code generation, or anything else you need.

But reality hits hard:

- Massive VRAM requirements: Full fine-tuning of a 7B-parameter model needs 40GB+ of VRAM, far beyond what consumer GPUs offer

- Painfully slow training: Even with LoRA and quantization, a single training epoch on the standard Hugging Face stack can take hours

- Steep learning curve: From data preparation and model loading to training configuration and export, every step is a potential minefield

- Hardware gatekeeping: Most tutorials assume you have an A100 — if you’re on an RTX 3060, you’re left out
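A back-of-the-envelope estimate shows where the 40GB+ figure comes from. The sketch below uses a common rule of thumb for mixed-precision training with Adam, and the helper names are illustrative:

- bf16 weights take 2 bytes per parameter.
- bf16 gradients take 2 bytes per parameter.
- An fp32 master copy plus two fp32 Adam moments take 12 bytes per parameter.

That adds up to 16 bytes per parameter. Activations are ignored, so real usage is higher. This is a generic estimate, not Unsloth's own accounting.

```python
GIB = 1024 ** 3


def full_finetune_gib(n_params: float, bytes_per_param: int = 16) -> float:
    """Rough VRAM for full fine-tuning, in GiB.

    Assumes 2 B bf16 weights + 2 B bf16 gradients + 12 B fp32 master
    weights and Adam moments = 16 bytes per parameter; activations ignored.
    """
    return n_params * bytes_per_param / GIB


def frozen_4bit_weights_gib(n_params: float) -> float:
    """Rough storage for frozen 4-bit base weights (0.5 bytes per parameter).

    Ignores quantization constants and the small trainable adapters.
    """
    return n_params * 0.5 / GIB


if __name__ == "__main__":
    n = 7e9  # a 7B-parameter model
    print(f"Full fine-tuning (no activations): {full_finetune_gib(n):.0f} GiB")
    print(f"4-bit frozen base weights:         {frozen_4bit_weights_gib(n):.1f} GiB")
```

Even before activations, full fine-tuning of a 7B model lands far above 40GB. Storing the same frozen base in 4-bit takes only a few GiB, which is why QLoRA-style training can fit on consumer cards.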

These barriers have locked a technology that should be universally accessible behind a door that only a privileged few can open.

What Is Unsloth?

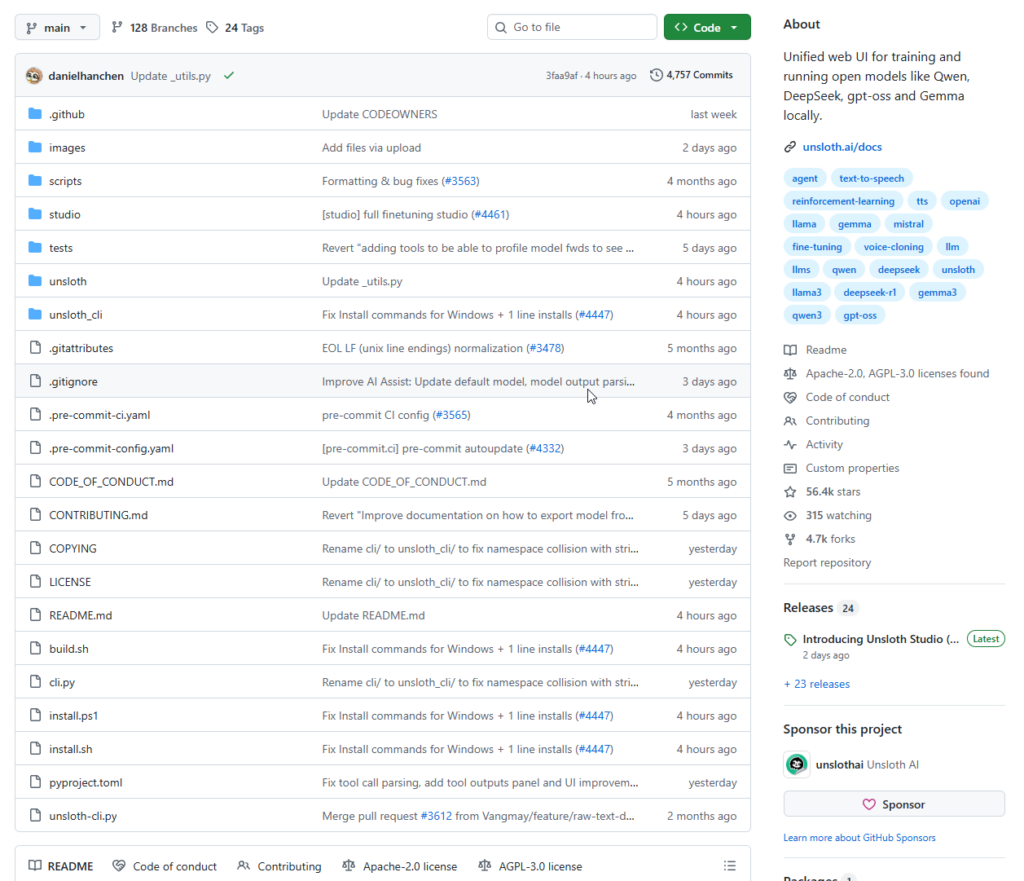

Unsloth is an open-source framework purpose-built for LLM fine-tuning and local inference optimization. Founded by brothers Daniel Han and Michael Han, it launched in 2023 and has since accumulated nearly 50,000 GitHub stars.

Its mission statement is simple: let anyone fine-tune large models on their own hardware.

All compute kernels are written in OpenAI’s Triton language and paired with a hand-written backpropagation engine. The resulting speedups involve no approximations, so accuracy is unchanged, and they require no hardware upgrades.
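Hand-written backpropagation means deriving each layer's gradient analytically instead of relying on autograd. Done correctly, the result is exact, not an approximation.

As a toy illustration, the sketch below derives the backward pass of RMSNorm, a normalization layer used in Llama-family models. It then checks the analytic gradient against a finite-difference estimate. This is not Unsloth's code, whose kernels are written in Triton and run on the GPU. The plain-Python lists, the helper names, and the `eps` value are assumptions chosen for readability.

```python
import math


def rmsnorm_forward(x, w, eps=1e-6):
    """y_i = w_i * x_i / r, with r = sqrt(mean(x^2) + eps). Returns (y, r)."""
    n = len(x)
    r = math.sqrt(sum(v * v for v in x) / n + eps)
    return [wi * xi / r for wi, xi in zip(w, x)], r


def rmsnorm_backward(x, w, r, grad_y):
    """Analytic gradients of the loss w.r.t. x and w, given dL/dy.

    dL/dx_j = g_j * w_j / r - x_j / (n * r^3) * sum_i(g_i * w_i * x_i)
    dL/dw_i = g_i * x_i / r
    """
    n = len(x)
    s = sum(g * wi * xi for g, wi, xi in zip(grad_y, w, x))  # shared term
    grad_x = [g * wj / r - xj * s / (n * r ** 3) for g, wj, xj in zip(grad_y, w, x)]
    grad_w = [g * xi / r for g, xi in zip(grad_y, x)]
    return grad_x, grad_w


def numerical_grad_x(x, w, grad_y, h=1e-6):
    """Central finite differences of L = sum_i g_i * y_i with respect to x."""
    def loss(xs):
        y, _ = rmsnorm_forward(xs, w)
        return sum(g * yi for g, yi in zip(grad_y, y))

    grads = []
    for j in range(len(x)):
        xp = list(x)
        xp[j] += h
        xm = list(x)
        xm[j] -= h
        grads.append((loss(xp) - loss(xm)) / (2 * h))
    return grads


if __name__ == "__main__":
    x = [0.5, -1.2, 2.0, 0.3]
    w = [1.0, 0.8, 1.1, 0.9]
    g = [0.1, -0.4, 0.2, 0.7]  # upstream gradient dL/dy
    _, r = rmsnorm_forward(x, w)
    analytic, _ = rmsnorm_backward(x, w, r, g)
    numeric = numerical_grad_x(x, w, g)
    # Should be close to zero; any gap is finite-difference error, not model error.
    print(max(abs(a - b) for a, b in zip(analytic, numeric)))
```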

Key numbers:

- Up to 12× faster training on MoE (Mixture-of-Experts) architectures, with 35% less VRAM

- Supports 4-bit QLoRA and 16-bit LoRA fine-tuning, with 4-bit quantization handled through bitsandbytes

- Runs on NVIDIA GPUs from 2018 onwards (CUDA Capability 7.0+: RTX 20/30/40 series, V100, T4, A100, H100, and more)

- Compatible with 500+ models including Qwen, DeepSeek, Gemma, Llama, Mistral, and Phi
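You can check whether a local GPU meets the CUDA Capability 7.0 floor listed above with PyTorch's `torch.cuda.get_device_capability()`. It returns a `(major, minor)` tuple. The sketch below wraps that call in a small helper. The helper names and the fallback when PyTorch or a GPU is missing are illustrative choices, not part of Unsloth.

```python
from typing import Optional, Tuple

MIN_CAPABILITY = (7, 0)  # minimum CUDA compute capability listed above


def meets_minimum(capability: Tuple[int, int],
                  minimum: Tuple[int, int] = MIN_CAPABILITY) -> bool:
    """Tuple comparison orders (major, minor) correctly, e.g. (8, 6) >= (7, 0)."""
    return tuple(capability) >= tuple(minimum)


def local_gpu_capability() -> Optional[Tuple[int, int]]:
    """Return the first CUDA device's (major, minor), or None if unavailable."""
    try:
        import torch
    except ImportError:
        return None
    if not torch.cuda.is_available():
        return None
    return torch.cuda.get_device_capability(0)


if __name__ == "__main__":
    cap = local_gpu_capability()
    if cap is None:
        print("No CUDA GPU detected (or PyTorch is not installed).")
    else:
        status = "supported" if meets_minimum(cap) else "below the 7.0 minimum"
        print(f"Compute capability {cap[0]}.{cap[1]}: {status}")
```

Some reference values:

- A T4 reports `(7, 5)`.
- An RTX 3060 reports `(8, 6)`.
- A Pascal-era GTX 1080 reports `(6, 1)`, which falls below the floor.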

Beyond fine-tuning, trained models can be exported in safetensors or GGUF format for direct use with llama.cpp, vLLM, Ollama, and other inference runtimes, forming a complete train-to-deploy pipeline.

Core Features

1. Blazing-Fast LoRA / QLoRA Fine-Tuning

This is Unsloth’s flagship capability. Through custom Triton kernels and hand-written backpropagation, it dramatically reduces VRAM usage and accelerates training — without sacrificing any accuracy.

Take an RTX 3060 (12GB VRAM) as an example: fine-tuning Llama-3.1-8B on it is typically out of reach with the standard Hugging Face stack. With Unsloth’s 4-bit QLoRA, the same task runs smoothly on the same GPU.
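Part of why this fits is that LoRA trains only small low-rank matrices. For a weight matrix with input size d_in and output size d_out, a rank-r adapter adds r × (d_in + d_out) trainable parameters.

The sketch below applies that formula to Llama-3.1-8B, using the seven target modules and `r=16` from the Option 4 code. The layer shapes are assumed from the published Llama-3.1-8B configuration:

- hidden size 4096
- MLP size 14336
- 32 layers
- 8 key/value heads of dimension 128

The helper name is illustrative.

```python
# Llama-3.1-8B shapes (assumed from its public configuration)
HIDDEN = 4096          # model dimension
INTERMEDIATE = 14336   # MLP hidden dimension
N_LAYERS = 32
KV_DIM = 8 * 128       # 8 key/value heads x head_dim 128 (grouped-query attention)

# (d_in, d_out) of each LoRA target module in one decoder layer
MODULE_SHAPES = {
    "q_proj": (HIDDEN, HIDDEN),
    "k_proj": (HIDDEN, KV_DIM),
    "v_proj": (HIDDEN, KV_DIM),
    "o_proj": (HIDDEN, HIDDEN),
    "gate_proj": (HIDDEN, INTERMEDIATE),
    "up_proj": (HIDDEN, INTERMEDIATE),
    "down_proj": (INTERMEDIATE, HIDDEN),
}


def lora_trainable_params(r: int, shapes=MODULE_SHAPES, n_layers: int = N_LAYERS) -> int:
    """Each adapted matrix gets A (r x d_in) and B (d_out x r): r * (d_in + d_out)."""
    per_layer = sum(r * (d_in + d_out) for d_in, d_out in shapes.values())
    return per_layer * n_layers


if __name__ == "__main__":
    trainable = lora_trainable_params(r=16)
    print(f"Trainable LoRA parameters at r=16: {trainable:,}")
    print(f"Share of a nominal 8B base model: {trainable / 8e9:.2%}")
```

That comes to roughly 42 million trainable parameters, about half a percent of the base model. Even at 16 bytes per parameter, their gradients and optimizer state stay well under 1 GB.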

2. Reinforcement Learning (RL) Support

Unsloth supports GRPO reinforcement learning for long-context reasoning, capable of replicating DeepSeek-R1’s “aha moment” with as little as 5GB of VRAM — turning models like Llama, Phi, and Mistral into reasoning-capable systems. For developers exploring o1-style reasoning enhancement, this is one of the lowest-barrier entry points available.
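GRPO (Group Relative Policy Optimization) does without a separate value model. For each prompt, the policy samples a group of completions. Each completion's advantage is then its reward relative to the rest of the group.

Below is a minimal sketch of that normalization step. It assumes rewards are standardized by the group mean and standard deviation. Implementations differ on details such as the epsilon and whether the standard deviation is the population or sample version. This is not Unsloth's or TRL's code, and the function name is illustrative.

```python
import statistics
from typing import List


def group_relative_advantages(rewards: List[float], eps: float = 1e-4) -> List[float]:
    """GRPO-style advantages: (r_i - mean(r)) / (std(r) + eps) within one group.

    Uses the population standard deviation; eps avoids division by zero
    when every completion in the group receives the same reward.
    """
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)
    return [(r - mean) / (std + eps) for r in rewards]


if __name__ == "__main__":
    # Hypothetical rewards for 4 sampled answers to one prompt:
    # 1.0 = correct final answer, 0.0 = incorrect.
    rewards = [1.0, 0.0, 0.0, 1.0]
    print(group_relative_advantages(rewards))
```

Correct answers get positive advantages and incorrect ones get negative advantages, so the policy update shifts probability toward the better completions. If every reward in a group is equal, all advantages are zero and that group provides no learning signal.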

3. Unsloth Studio — Visual Web UI

Unsloth now ships with a unified web UI that lets you run and train open-source models like Qwen, DeepSeek, gpt-oss, and Gemma locally — no coding required. Data preparation, model training, and inference comparison are all handled through the interface.

The Data Recipes feature enables a graphical node-based workflow for converting unstructured documents — PDFs, CSVs, JSON files — into training-ready datasets.
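Data Recipes itself is a graphical tool, but the kind of output it targets is easy to picture in code. The sketch below is a hypothetical illustration, not how Data Recipes is implemented:

- It reads CSV rows with assumed `question` and `answer` columns.
- It turns each row into a chat-style record: a `messages` list of `role`/`content` dicts, the conversational format that TRL's SFTTrainer accepts.
- It writes the records out as JSON Lines.

The system prompt and helper names are illustrative.

```python
import csv
import io
import json
from typing import Dict, Iterable, List


def rows_to_chat_records(rows: Iterable[Dict[str, str]],
                         system_prompt: str = "You are a helpful assistant.") -> List[dict]:
    """Map each {question, answer} row to a chat-format training record."""
    records = []
    for row in rows:
        question = (row.get("question") or "").strip()
        answer = (row.get("answer") or "").strip()
        if not question or not answer:
            continue  # skip incomplete rows rather than training on them
        records.append({"messages": [
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": question},
            {"role": "assistant", "content": answer},
        ]})
    return records


def to_jsonl(records: List[dict]) -> str:
    """Serialize one JSON object per line (JSON Lines)."""
    return "\n".join(json.dumps(r, ensure_ascii=False) for r in records)


if __name__ == "__main__":
    # Hypothetical CSV content; in practice this would come from a file.
    sample_csv = io.StringIO(
        "question,answer\n"
        "What is LoRA?,A method that trains small low-rank adapter matrices.\n"
        "Empty row?,\n"
    )
    records = rows_to_chat_records(csv.DictReader(sample_csv))
    print(to_jsonl(records))
```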

4. Dynamic GGUF Quantization

Unsloth’s Dynamic GGUF 2.0 applies different quantization precision to different layers, striking a better balance between model size and inference quality. It has become one of the most-downloaded sources for quantized models.
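Why vary precision by layer? Quantization error grows as the bit width shrinks, and some layers are more sensitive to that error than others.

The toy sketch below shows reconstruction error dropping as bits increase. It uses simple symmetric absmax quantization as a stand-in; it is not the block-wise K-quant formats GGUF actually uses, nor Unsloth's method for choosing per-layer precision. A dynamic scheme spends extra bits only where that drop matters most. The weight values and helper names are hypothetical.

```python
from typing import List


def quantize_dequantize(weights: List[float], bits: int) -> List[float]:
    """Symmetric absmax quantization to signed `bits`-bit integers, then back to floats."""
    qmax = 2 ** (bits - 1) - 1          # e.g. 7 for 4-bit, 127 for 8-bit
    absmax = max(abs(w) for w in weights)
    if absmax == 0:
        return [0.0] * len(weights)
    scale = absmax / qmax               # one scale shared by the whole block
    return [round(w / scale) * scale for w in weights]


def mean_abs_error(weights: List[float], bits: int) -> float:
    """Average absolute difference between original and reconstructed weights."""
    restored = quantize_dequantize(weights, bits)
    return sum(abs(a - b) for a, b in zip(weights, restored)) / len(weights)


if __name__ == "__main__":
    block = [0.1, -0.5, 0.33, 0.9, -0.72, 0.05]  # hypothetical weight block
    for bits in (2, 4, 6, 8):
        print(f"{bits}-bit mean abs error: {mean_abs_error(block, bits):.4f}")
```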

5. Multi-Modal and Multi-Task Support

Unsloth supports inference and training across text, audio, embedding, vision, and TTS models, well beyond its original identity as “just an LLM fine-tuning tool.”

How to Use It

Option 1: pip Install

```bash
# Install uv (recommended package manager)
curl -LsSf https://astral.sh/uv/install.sh | sh

# Create and activate a virtual environment
uv venv unsloth_env --python 3.13
source unsloth_env/bin/activate

# Install unsloth (--torch-backend=auto lets uv pick a matching PyTorch build)
uv pip install unsloth --torch-backend=auto
```

Option 2: Launch Unsloth Studio (Web UI)

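```bash
# One-time setup (uses the unsloth package installed in Option 1)
unsloth studio setup

# Start the web UI, listening on all interfaces on port 8888
unsloth studio -H 0.0.0.0 -p 8888
```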

Then open http://localhost:8888 in your browser.

Option 3: Docker

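```bash
# --gpus all requires the NVIDIA Container Toolkit on the host.
# Port 8888 serves Jupyter (password set via JUPYTER_PASSWORD);
# host port 2222 maps to the container's SSH port 22.
docker run -d \
  -e JUPYTER_PASSWORD="mypassword" \
  -p 8888:8888 -p 8000:8000 -p 2222:22 \
  -v "$(pwd)/work:/workspace/work" \
  --gpus all \
  unsloth/unsloth
```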

Option 4: Code-First Fine-Tuning (Most Flexible)

A minimal example that loads Llama-3.1-8B in 4-bit and attaches LoRA adapters with Unsloth:

```python
from unsloth import FastLanguageModel  # import unsloth first so its patches apply
import torch

# Load model (4-bit quantized to save VRAM)
model, tokenizer = FastLanguageModel.from_pretrained(
    model_name="unsloth/Meta-Llama-3.1-8B-Instruct-bnb-4bit",
    max_seq_length=2048,  # maximum sequence length used during training
    load_in_4bit=True,    # QLoRA: keep the frozen base weights in 4-bit
)

# Add LoRA adapters
model = FastLanguageModel.get_peft_model(
    model,
    r=16,  # LoRA rank
    target_modules=["q_proj", "k_proj", "v_proj", "o_proj",
                    "gate_proj", "up_proj", "down_proj"],
    lora_alpha=16,   # adapter scaling; the effective scale is lora_alpha / r
    lora_dropout=0,  # 0 is the setting Unsloth optimizes for
    bias="none",
)

# Continue with TRL's SFTTrainer for training...
```

After training, export to GGUF for Ollama in one line:

```python
model.save_pretrained_gguf("my_model", tokenizer, quantization_method="q4_k_m")
```

Free Notebooks (No Local GPU Needed)

Unsloth provides free Google Colab and Kaggle notebooks that run on a single NVIDIA GPU and deliver 2× or greater fine-tuning speedups. If you don’t have a local GPU, the official notebooks are the fastest way to get started. The team maintains over 250 templates covering different models and tasks.

Supported Models

| Family | Representative Models |
|---|---|
| Meta Llama | Llama 3.1 / 3.2 / 3.3 / 4 |
| Alibaba Qwen | Qwen2.5 / Qwen3 / Qwen3-Coder |
| DeepSeek | DeepSeek-R1 / DeepSeek-V3 / DeepSeek-OCR |
| Google Gemma | Gemma 2 / Gemma 3 |
| Microsoft | Phi-4 / BitNet |
| Mistral AI | Mistral / Mistral Small |
| OpenAI | gpt-oss (open-weight models) |
| Moonshot AI | Kimi K2 / K2.5 |

Summary

Unsloth solves a fundamental democratization problem: bringing LLM fine-tuning capability — once the exclusive domain of well-resourced organizations — within reach of consumer-grade GPUs.

Its core value comes down to three things:

- Extreme efficiency: Speed and VRAM improvements come from ground-up kernel rewrites, not parameter tweaks — they are systemic, not incidental

- Zero accuracy loss: Unlike acceleration approaches that rely on approximations, Unsloth’s kernels compute the same math exactly, so training results match the standard implementation up to ordinary floating-point differences

- Complete toolchain: From dataset construction and model training to RL fine-tuning, quantized export, and local deployment — one framework covers the entire workflow

For developers who have a consumer GPU (RTX 3060 or better) and want to fine-tune open-source models for a specific domain, Unsloth is the most compelling framework to reach for first.

- GitHub: https://github.com/unslothai/unsloth

- Documentation: https://unsloth.ai/docs

- Official Notebooks: https://github.com/unslothai/notebooks