What Problem Does It Solve?

Over the past two years, AI-assisted programming has evolved through three distinct stages: code completion → IDE Copilot → long-running autonomous agents in the cloud.

That final stage has quietly been reached by some of the world’s leading tech companies:

- Stripe has Minions

- Ramp has Inspect

- Coinbase has Cloudbot

Each company built its system independently, yet all converged on nearly identical architectures: isolated cloud sandboxes, carefully curated toolsets, Slack-based triggering, Linear/GitHub context injection, and sub-agent orchestration.

The problem? These are all internal systems — unavailable to anyone outside those companies.

LangChain identified this gap and built Open SWE: an open-source distillation of these architectural patterns that any engineering team can deploy for themselves.

What Is Open SWE?

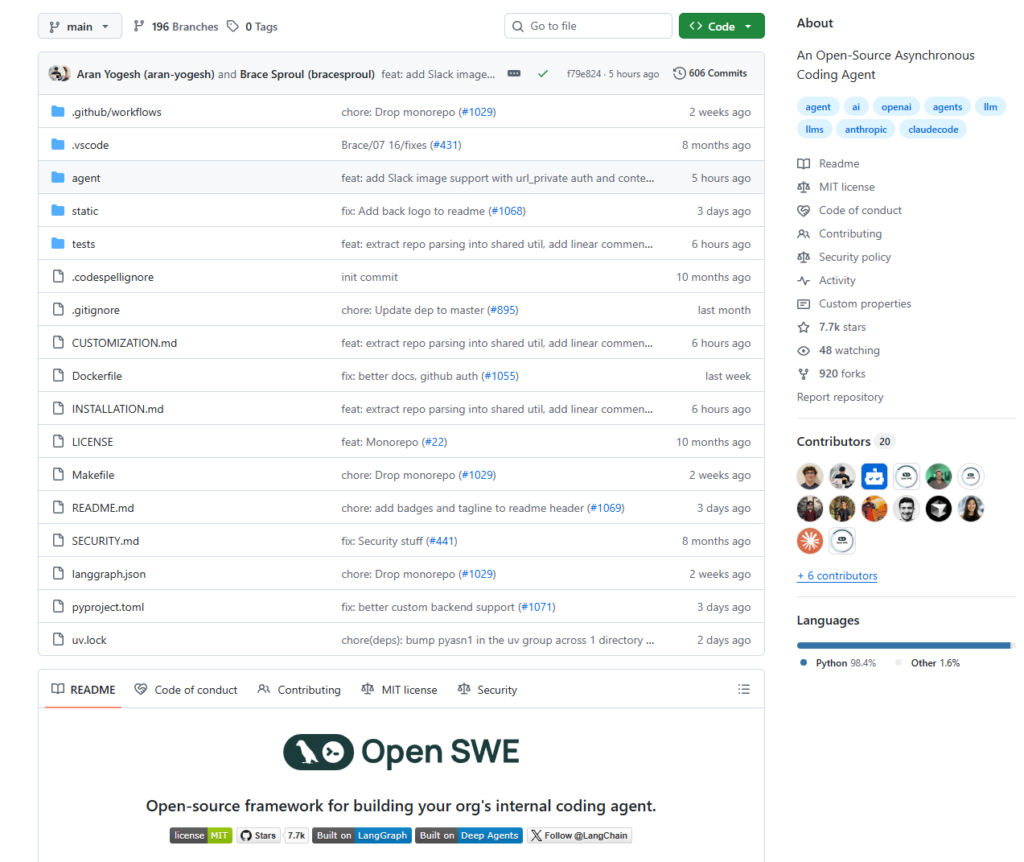

Open SWE is an open-source, cloud-based, asynchronously running coding agent framework built on LangGraph and Deep Agents, released under the MIT license.

It’s not an IDE plugin or a conversational autocomplete tool. It’s designed to work like a real teammate: you hand it a task, it studies the codebase, draws up an execution plan, writes code, runs tests, self-reviews, and opens a PR for you.

Core Architecture

Open SWE uses a multi-agent pipeline design:

Manager (task coordination)

↓

Planner (execution planning, with optional human-in-the-loop approval)

↓

Programmer (writes code and runs tests inside an isolated sandbox)

↓

Reviewer (validates output, then opens a PR)
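To make the hand-offs concrete, here is a minimal Python sketch of the four stages as plain functions. It is not Open SWE's implementation, which is built on LangGraph. `Task`, `run_pipeline`, the placeholder plan steps, and the `approve` callback are all hypothetical. The `approve` callback stands in for human-in-the-loop approval.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Task:
    """Hypothetical task record passed between pipeline stages."""
    request: str
    plan: List[str] = field(default_factory=list)
    changes: List[str] = field(default_factory=list)
    approved: bool = False
    pr_url: str = ""


def manager(request: str) -> Task:
    # Manager: normalize the incoming request into a task record.
    return Task(request=request.strip())


def planner(task: Task, approve: Callable[[List[str]], bool]) -> Task:
    # Planner: draft steps, then hand the plan to an (optional) human approver.
    task.plan = [f"inspect code related to: {task.request}",
                 f"implement: {task.request}",
                 "run tests"]
    task.approved = approve(task.plan)
    return task


def programmer(task: Task) -> Task:
    # Programmer: Open SWE does this inside a sandbox; here we only record each step.
    task.changes = [f"done: {step}" for step in task.plan]
    return task


def reviewer(task: Task) -> Task:
    # Reviewer: check every planned step was executed before "opening" a PR.
    if len(task.changes) == len(task.plan):
        task.pr_url = "https://example.invalid/pr/1"  # placeholder; no real PR is created
    return task


def run_pipeline(request: str,
                 approve: Callable[[List[str]], bool] = lambda plan: True) -> Task:
    task = planner(manager(request), approve)
    if not task.approved:
        return task  # plan rejected: stop before any code is written
    return reviewer(programmer(task))
```

Passing `approve=lambda plan: False` models a human rejecting the plan. The pipeline then stops before the Programmer stage, so no code is written and no PR is opened.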

Key features:

- Isolated sandbox execution: Supports multiple sandbox backends including Modal, Daytona, Runloop, and LangSmith. Each task runs in its own isolated environment — nothing touches your local machine.

- Sub-agent orchestration: Complex tasks can be broken down and handed off to multiple sub-agents running in parallel, each with independent context to avoid cross-contamination.

- Persistent threads: Multiple messages within the same GitHub Issue or Slack thread are routed to the same running agent instance, so you can inject follow-up instructions mid-task.

- Flexible model choice: Claude Opus 4 by default, with support for any LLM provider.

- AGENTS.md support: If a repository contains an `AGENTS.md` file at its root, Open SWE automatically injects it into the system prompt.
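The `AGENTS.md` behavior can be sketched in a few lines, assuming the file's contents are simply appended to a base prompt. `build_system_prompt` and the `<repository_instructions>` wrapper are illustrative choices, not Open SWE's actual prompt format.

```python
from pathlib import Path


def build_system_prompt(repo_dir: str, base_prompt: str) -> str:
    """Append the repo's root AGENTS.md (if present) to a base prompt.

    Hypothetical helper: Open SWE's real prompt construction may differ.
    """
    agents_md = Path(repo_dir) / "AGENTS.md"
    if not agents_md.is_file():
        return base_prompt  # no repository-specific instructions to inject
    instructions = agents_md.read_text(encoding="utf-8").strip()
    return (f"{base_prompt}\n\n<repository_instructions>\n"
            f"{instructions}\n</repository_instructions>")
```

Only the repository root is checked here, matching the behavior described above.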

Trigger Methods

Open SWE integrates directly into existing developer workflows rather than demanding a context switch to a new tool:

- Slack: Mention the bot in any thread, using `repo:owner/name` syntax to specify the target repository. The agent replies with progress updates and a PR link directly in the thread.

- Linear: Mention `@openswe` in an issue comment. The agent reads the full issue context, acknowledges with 👀, and posts results as a follow-up comment when done.

- GitHub: Mention `@openswe` in comments on agent-created PRs to handle review feedback and push revisions.
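Two trigger details lend themselves to a short sketch:

- extracting the `repo:owner/name` target from a Slack message;
- routing every message from one thread to the same agent run (the persistent-threads feature).

The regex's allowed characters, `parse_repo`, and `ThreadRouter` are assumptions for illustration. A real deployment would forward queued messages to a running agent rather than store them in a dict.

```python
import re
from typing import Dict, List, Optional

# Matches e.g. "repo:langchain-ai/open-swe"; the allowed character set is an assumption.
REPO_PATTERN = re.compile(r"\brepo:([A-Za-z0-9_.-]+)/([A-Za-z0-9_.-]+)")


def parse_repo(message: str) -> Optional[str]:
    """Return "owner/name" from the first repo: token, or None if absent."""
    match = REPO_PATTERN.search(message)
    return f"{match.group(1)}/{match.group(2)}" if match else None


class ThreadRouter:
    """Routes messages from the same thread to the same (hypothetical) agent run."""

    def __init__(self) -> None:
        self._runs: Dict[str, List[str]] = {}

    def route(self, source: str, thread_id: str, message: str) -> str:
        key = f"{source}:{thread_id}"               # one run per (source, thread)
        inbox = self._runs.setdefault(key, [])      # first message starts a new run
        inbox.append(message)                       # later ones become follow-ups
        return key

    def messages(self, key: str) -> List[str]:
        return list(self._runs.get(key, []))
```

The key includes the source, so a Slack thread and a Linear issue that happen to share an ID still map to separate runs.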

How to Use It

Option 1: Hosted Version (Fastest)

Visit swe.langchain.com, connect your GitHub account, enter your Anthropic API key in settings, and start creating tasks immediately.

Option 2: Self-Hosted

1. Clone the repository

```shell
git clone https://github.com/langchain-ai/open-swe
cd open-swe
```

2. Follow the Installation Guide

- Create a GitHub App (for repository authorization and PR operations)

- Configure LangSmith (for agent observability and sandboxing)

- Set up your preferred triggers: Linear, Slack, and/or GitHub webhooks
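If you wire up GitHub webhooks yourself, authenticate incoming requests before letting them start an agent. GitHub signs each delivery in the `X-Hub-Signature-256` header, as `sha256=<hex digest>`. The digest is an HMAC-SHA256 of the raw request body, keyed by the webhook secret.

The helper below is a generic sketch of that check, and `verify_github_signature` is a hypothetical name. How Open SWE's own webhook handler performs this check is not covered here.

```python
import hashlib
import hmac


def verify_github_signature(secret: str, body: bytes, signature_header: str) -> bool:
    """Check GitHub's X-Hub-Signature-256 header against the raw request body."""
    if not signature_header or not signature_header.startswith("sha256="):
        return False
    digest = hmac.new(secret.encode("utf-8"), body, hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking how many characters matched.
    return hmac.compare_digest("sha256=" + digest, signature_header)
```

Compute the HMAC over the exact bytes received. Re-serializing parsed JSON can change whitespace or key order and break the match.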

3. Core code example

Spinning up a Deep Agent is straightforward:

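```python
from deepagents import create_deep_agent

# construct_system_prompt, http_request, fetch_url, commit_and_open_pr and linear
# are Open SWE-specific helpers/tools, not part of the deepagents package;
# "..." marks arguments elided in this excerpt.
agent = create_deep_agent(
    model="anthropic:claude-opus-4-6",
    system_prompt=construct_system_prompt(repo_dir, ...),
    tools=[http_request, fetch_url, commit_and_open_pr, linear, ...],
)
```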

Built-in tools include: read_file, write_file, edit_file, ls, glob, grep, write_todos (structured task planning), and task (sub-agent invocation).
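The `task` tool's key property is that each sub-agent gets independent context, and this can be illustrated without an LLM. This is a stand-in, not the Deep Agents implementation. Each sub-agent is a plain function that receives its own fresh context list and runs in a thread pool. `run_subtasks` and `fake_sub_agent` are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List

# A "sub-agent" here is any function from (subtask, private context) to a result.
SubAgent = Callable[[str, List[str]], str]


def fake_sub_agent(subtask: str, context: List[str]) -> str:
    # Stand-in for an LLM call: it only sees and mutates its own context list.
    context.append(f"working on {subtask}")
    return f"{subtask}: saw {len(context)} message(s)"


def run_subtasks(subtasks: List[str], agent: SubAgent) -> Dict[str, str]:
    """Fan out subtasks in parallel, each with a fresh, isolated context."""
    with ThreadPoolExecutor(max_workers=len(subtasks) or 1) as pool:
        # A new [] is created per subtask, so contexts are never shared.
        futures = {task: pool.submit(agent, task, []) for task in subtasks}
        return {task: fut.result() for task, fut in futures.items()}
```

Each call gets a new list, so neither sub-agent sees the other's messages. That isolation is what prevents cross-contamination in the sub-agent design.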

4. Customization

Open SWE ships with a comprehensive Customization Guide covering:

- Sandbox backends: Swap in your internal container platform

- Models: Use different models for different sub-tasks

- Triggers: Integrate with internal messaging systems

- Toolsets: Add tools that access internal APIs

- Middleware: Add CI checks, code review gates, and more
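Swapping sandbox backends is easiest when agent code depends on a narrow interface. The sketch below assumes a hypothetical `SandboxBackend` protocol with a single `execute` method. `LocalTempDirSandbox` just runs commands in a throwaway directory and provides no real isolation. It only stands in for something like an internal container platform.

```python
import subprocess
import sys
import tempfile
from dataclasses import dataclass
from typing import List, Protocol


@dataclass
class ExecResult:
    exit_code: int
    output: str


class SandboxBackend(Protocol):
    """Hypothetical interface an internal container platform could implement."""

    def execute(self, command: List[str]) -> ExecResult: ...


class LocalTempDirSandbox:
    """Toy backend: runs commands in a temporary directory (NOT real isolation)."""

    def execute(self, command: List[str]) -> ExecResult:
        with tempfile.TemporaryDirectory() as workdir:
            proc = subprocess.run(command, cwd=workdir, capture_output=True,
                                  text=True, timeout=60)
        return ExecResult(proc.returncode, proc.stdout + proc.stderr)


def run_tests(sandbox: SandboxBackend) -> bool:
    # Agent code depends only on the protocol, so any backend can be swapped in.
    result = sandbox.execute([sys.executable, "-c", "print('tests ok')"])
    return result.exit_code == 0 and "tests ok" in result.output
```

A container-based backend would implement the same `execute` method, and `run_tests` would not need to change.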

What Is It Good For?

Well-suited for:

- Multi-file refactoring

- Bulk test generation

- Dependency upgrades

- Documentation generation

- Feature scaffolding

Less suitable for:

- Small single-line fixes (planning overhead outweighs the benefit)

- Tasks requiring intensive real-time interaction

Summary

Open SWE has a clear mission: democratize the internal AI coding agent architectures that Stripe, Ramp, and Coinbase each built quietly behind closed doors.

AI’s role in software engineering is evolving from autocomplete and IDE Copilots toward autonomous agents that run for extended periods in the cloud, and Open SWE is arguably the first systematic open-source reference implementation of that shift.

Its value isn’t in clever prompting. It lies in presenting the complete architecture required to make an AI coding agent production-ready: isolated sandboxes, sub-agent orchestration, deep integration with developer workflows, and full observability.

For engineering teams that want to deploy their own internal coding agent without building from scratch, Open SWE is the most complete starting point available today.

Repository: https://github.com/langchain-ai/open-swe ⭐ 5.3k | MIT License | Actively maintained